Outline

- AI systems retrieve brand data from three surfaces

- Crawled web content builds topical authority

- Feeds and APIs provide structured, machine-readable data

- Live sites enable agentic AI interaction

- Consistency across surfaces increases citation likelihood

- Multi-surface optimisation improves Share of Model

- RAG triangulation drives AI confidence scores

- B2B brands must optimise beyond traditional SEO

Key Takeaways

- Three data surfaces determine your AI visibility

- Traditional SEO only optimises one of three surfaces

- Structured feeds with timestamps signal data freshness

- Agentic AI navigates websites like human users

- Information triangulation builds AI citation confidence

- Cross-surface contradictions degrade trust scores

- Schema markup enables accurate AI responses

- CiteCompass monitors all three surfaces comprehensively

Introduction

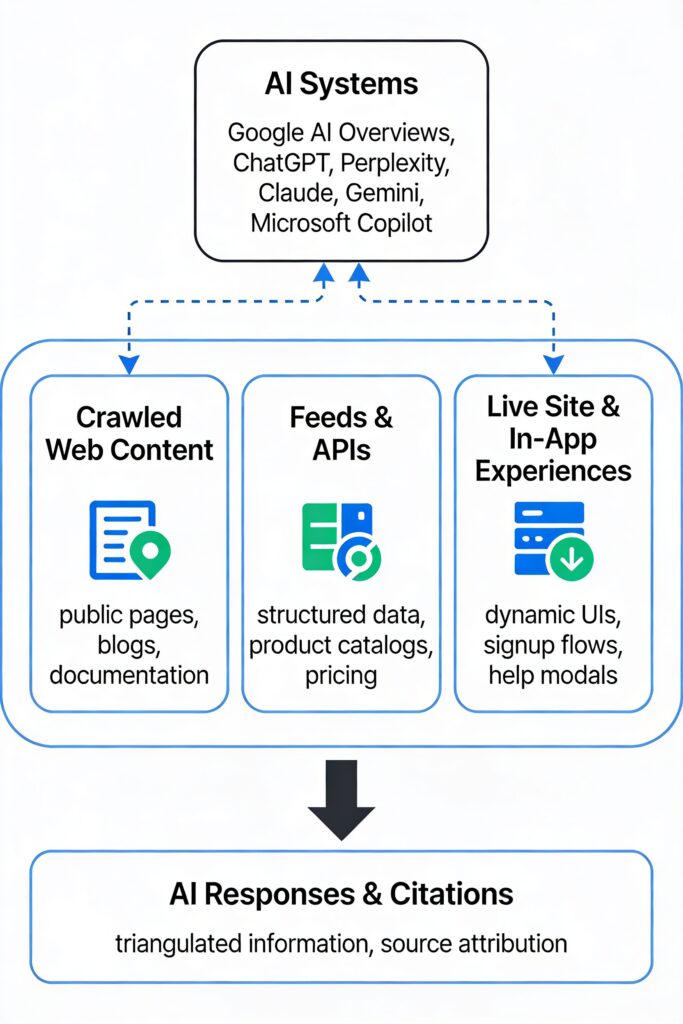

When buyers use AI platforms such as ChatGPT, Gemini, Claude, or Perplexity to research solutions, these systems do not rely on a single source. They pull information from three distinct locations known as AI Data Surfaces. Understanding these surfaces is critical because AI systems build confidence through triangulation – when they find consistent information across multiple surfaces, they are far more likely to cite your brand.

Microsoft Advertising’s 2024 report From Discovery to Influence: A Guide to AEO and GEO identifies these three data surfaces as the primary mechanism through which AI systems build understanding of brands. For B2B companies – whether selling software, professional services, manufacturing equipment, or industrial supplies – optimising all three surfaces is now essential for maintaining AI visibility and Citation Authority.

What Are the Three AI Data Surfaces?

The three-surface framework, established by Microsoft Advertising’s AEO and GEO guide (2024), categorises how AI systems access brand information into three layers.

Surface 1: Crawled Web Content

This includes all public pages indexed by search engines and AI systems – your marketing site, blog posts, documentation, press releases, case studies, white papers, FAQ pages, and knowledge bases. Crawled web content establishes your domain expertise and entity disambiguation (how AI models differentiate your brand from competitors and associate your name with specific capabilities, use cases, and industries).

Industry-specific examples of crawled web content include:

- SaaS and software: API documentation, integration guides, feature roadmaps

- Professional services: thought leadership, methodology frameworks, industry expertise

- Manufacturing: technical datasheets, material specifications, compliance certifications

- Distribution and wholesale: product catalogues, supplier networks, regional availability

- B2B services: service offerings, geographic coverage, industry specialisations

Surface 2: Feeds and APIs

Feeds and APIs are structured data endpoints that deliver synchronised, machine-readable information. Rather than tracking retail inventory quantities, B2B feeds communicate capabilities, availability, pricing models, and technical specifications that AI systems can retrieve and cite with confidence.

Depending on your business type, feeds and APIs may include:

- Pricing APIs with plans, billing intervals, feature matrices, and usage limits

- Status APIs providing uptime statistics, incident history, and SLA commitments

- Changelog feeds documenting new features, deprecations, and versioning

- Practitioner directories structured as Person schema with expertise data

- Product specification APIs delivering technical specs and material properties

- Certification feeds covering ISO approvals, regulatory compliance, and safety standards

- Service area APIs detailing geographic coverage, response times, and capacity

Surface 3: Live Site and In-App Experiences

This surface addresses the rise of agentic AI – autonomous systems that navigate websites and applications like human users. AI agents that work through your trial signup flow can assess friction points, gauge time-to-value from onboarding sequences, extract troubleshooting guidance from in-app help modals, and map configuration complexity from settings panels.

This surface increasingly influences AI recommendations in conversational contexts. When a buyer asks an AI platform “Which consulting firm has expertise in pharmaceutical regulatory compliance?” or “Can this equipment manufacturer ship to Southeast Asia?”, the AI agent’s ability to navigate your live site directly shapes its response.

Why Do AI Data Surfaces Matter for B2B Companies?

Traditional SEO optimises for one surface: the crawled web. AI visibility optimisation requires a coordinated strategy across all three data surfaces because AI systems use Retrieval-Augmented Generation (RAG) to ground their responses in multiple, verifiable sources.

AI Systems Build Confidence Through Triangulation

When an AI model finds consistent information about your brand across crawled content, structured feeds, and live site interactions, it assigns higher trust scores and increases citation likelihood. The Microsoft framework emphasises that synchronisation across surfaces is a primary ranking factor for AI visibility.

Multi-Surface Optimisation Improves Share of Model

A B2B company optimising only its blog and marketing pages (Surface 1) may achieve high organic traffic but low Citation Authority. AI systems increasingly weight fresh, structured data (Surface 2) and direct interaction evidence (Surface 3) when evaluating source trustworthiness.

Companies that optimise all three surfaces earn higher Share of Model (SoM) – the percentage of AI responses in which their brand is mentioned or cited for relevant queries in their category.
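As a rough illustration of the definition above, Share of Model can be computed as a simple percentage over a sample of AI responses. The sketch below assumes you have already collected the response texts yourself; the brand names and sample answers are hypothetical, and plain substring matching is a simplification of real mention detection.

```python
def share_of_model(responses: list[str], brand: str) -> float:
    """Return the percentage of responses that mention the brand (case-insensitive)."""
    if not responses:
        return 0.0
    needle = brand.casefold()
    hits = sum(1 for text in responses if needle in text.casefold())
    return 100.0 * hits / len(responses)


# Hypothetical sample of AI answers collected for one category's query set.
sample = [
    "Popular options include Acme CI and BuildFast.",
    "BuildFast is widely used for Python projects.",
    "Consider Acme CI for its pricing transparency.",
    "Many teams use self-hosted runners.",
]

som = share_of_model(sample, "Acme CI")
print(f"Share of Model: {som:.1f}%")  # prints "Share of Model: 50.0%"
```

In practice the result depends heavily on which queries and platforms are sampled, so comparisons are only meaningful when the query set is held constant.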

How AI Systems Use Multiple Surfaces in Practice

RAG systems do not rely on a single source when formulating responses. They perform multi-stage retrieval across all three surfaces.

- Initial retrieval: RAG systems search indexed content (Surface 1) for pages matching query intent.

- Verification retrieval: Systems check structured feeds (Surface 2) for authoritative data to verify claims from web content.

- Interaction validation: For queries involving user experience or usability, advanced AI agents simulate site interactions (Surface 3) to validate claims.

When all three surfaces provide consistent, complementary information, the RAG system assigns higher confidence scores. Confidence directly influences whether your brand receives a citation, a mention, or is excluded entirely.
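No AI platform publishes how it computes confidence, so the following is only a toy model of the triangulation idea: it treats each surface as one vote and reports the fraction of surfaces that agree on the most common value. The surface names, the pricing values, and the scoring rule are all assumptions made for illustration.

```python
from collections import Counter
from typing import Optional


def triangulate(claims: dict[str, Optional[str]]) -> tuple[Optional[str], float]:
    """Return the most common value and the fraction of surfaces that report it.

    Surfaces with no data (None) count against agreement.
    """
    observed = [value for value in claims.values() if value is not None]
    if not observed:
        return None, 0.0
    value, count = Counter(observed).most_common(1)[0]
    return value, count / len(claims)


# Hypothetical pricing claim as seen on each surface.
claims = {
    "crawled_web": "$49/month",
    "feed": "$49/month",
    "live_site": "$59/month",  # contradiction lowers agreement
}

value, agreement = triangulate(claims)
print(value, round(agreement, 2))
```

A fully consistent brand would score 1.0 in this toy model, while a missing or contradictory surface pulls the score down.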

Example: How AI Answers a Buyer’s Question

When ChatGPT answers “What are the best CI/CD tools for Python projects?”, it synthesises information across all three surfaces:

- Surface 1: blog comparisons, documentation quality, community tutorials

- Surface 2: pricing transparency, integration lists, platform support matrices

- Surface 3: signup friction, trial limitations, UI clarity observed via browsing agents

The same triangulation pattern applies across every B2B sector – from professional services firms being evaluated on practitioner directories and consultation flows, to manufacturers assessed on specification feeds and product configurators.

Why Multi-Surface Optimisation Improves Citation Outcomes

AI systems are designed to minimise hallucination risk. When they can corroborate information across multiple data surfaces, they treat that information as more reliable. This is not hypothetical – it reflects how modern RAG architectures function. The underlying principle is information triangulation: multiple independent sources corroborating the same fact increase confidence in that fact’s accuracy.

Pricing Information Example

Consider how multi-surface coverage changes AI behaviour for pricing queries:

- Single-surface (web only): AI finds pricing on your /pricing page but has no way to verify recency or accuracy.

- Multi-surface: AI finds pricing on your /pricing page, verifies it against your pricing feed with a recent dateModified timestamp, and observes the same pricing in your signup flow. Result: higher confidence and greater likelihood of citing specific pricing.

Service Capabilities Example

- Single-surface: AI reads service descriptions but cannot verify claims.

- Multi-surface: AI reads service descriptions, validates capabilities through structured service catalogue feeds, and observes proof points through case studies and testimonials. Result: higher likelihood of recommendation.

How to Optimise Each AI Data Surface

Optimising Surface 1: Crawled Web Content

The objective is to establish topical authority and entity recognition through high-quality, semantically rich public content.

- Ensure every page includes appropriate schema markup (TechArticle, Article, or FAQPage) with headline, author, datePublished, and dateModified fields.

- Use consistent H2 headings that function as standalone retrieval keys, such as “What is [Concept]?” or “How to Implement [Feature]”.

- Include DefinedTerm schema for proprietary concepts and product terminology.

- Structure FAQ pages with FAQPage schema and Question/Answer pairs.

- Build a pillar-and-cluster content architecture linking core topics to detailed subtopics.

- Use BreadcrumbList schema to expose content hierarchy to AI systems.

Common pitfall: Publishing content without dateModified timestamps. AI systems interpret missing or stale dates as signals of low freshness, reducing citation likelihood.
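To make the date fields concrete, here is a minimal sketch that builds TechArticle JSON-LD with both datePublished and dateModified and wraps it in a script tag for the page head. The helper name, headline, and author are hypothetical; only the Schema.org property names come from the guidance above.

```python
import json
from datetime import date


def article_jsonld(headline: str, author: str, published: date, modified: date) -> str:
    """Build a JSON-LD script tag for a TechArticle, refusing inconsistent dates."""
    if modified < published:
        raise ValueError("dateModified must not be earlier than datePublished")
    data = {
        "@context": "https://schema.org",
        "@type": "TechArticle",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Hypothetical article metadata.
snippet = article_jsonld(
    headline="How to Implement Webhook Retries",
    author="Jane Example",
    published=date(2025, 1, 15),
    modified=date(2025, 3, 1),
)
print(snippet)
```

Generating the markup from the same record that drives the visible page helps keep the displayed date and the schema date from drifting apart.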

Optimising Surface 2: Feeds and APIs

The objective is to provide machine-readable, real-time data that AI systems can retrieve and cite with confidence.

Step 1: Identify your primary structured data. Determine what information customers and AI systems need from you in machine-readable form. Every B2B company has core data that should be structured: product or service specifications, pricing and availability, expertise and credentials, and performance and reliability metrics.

Step 2: Map to the right Schema.org types. Use the appropriate Schema.org types for your business model. SaaS companies should use SoftwareApplication and Offer schemas. Professional services firms should use Person and Service schemas. Manufacturers should use Product and PropertyValue schemas.
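For the SaaS case, the mapping might look like the sketch below, which nests an Offer inside a SoftwareApplication. The product name, plan, and price are hypothetical, and the set of properties is a small illustrative subset rather than a complete or required list.

```python
import json


def software_offer_jsonld(name: str, plan: str, price: str, currency: str, modified: str) -> dict:
    """Describe one pricing plan of a software product using Schema.org types."""
    return {
        "@context": "https://schema.org",
        "@type": "SoftwareApplication",
        "name": name,
        "applicationCategory": "BusinessApplication",
        "dateModified": modified,
        "offers": {
            "@type": "Offer",
            "name": plan,
            "price": price,
            "priceCurrency": currency,
        },
    }


# Hypothetical product and plan.
doc = software_offer_jsonld("Acme CI", "Team", "49.00", "USD", "2025-03-01")
print(json.dumps(doc, indent=2))
```

A professional services firm or manufacturer would follow the same pattern with Person and Service, or Product and PropertyValue, in place of the types shown here.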

Step 3: Publish feeds with freshness signals. All feeds should include dateModified timestamps to signal recency. Update these timestamps whenever substantive information changes. AI systems use modification dates as trust signals.
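One way to keep freshness signals honest is to audit your own feeds for stale timestamps before publishing. This sketch assumes ISO 8601 dateModified values and a 90-day threshold; the threshold is an arbitrary assumption, not a figure from any AI platform.

```python
from datetime import datetime, timedelta, timezone


def is_fresh(date_modified: str, max_age_days: int, now: datetime) -> bool:
    """Return True if an ISO 8601 dateModified value is within max_age_days of now."""
    modified = datetime.fromisoformat(date_modified)
    if modified.tzinfo is None:
        # Assumption: timestamps without an offset are treated as UTC.
        modified = modified.replace(tzinfo=timezone.utc)
    return now - modified <= timedelta(days=max_age_days)


# Fixed reference time so the example is reproducible.
now = datetime(2025, 3, 31, tzinfo=timezone.utc)
print(is_fresh("2025-03-01T00:00:00+00:00", 90, now))  # True
print(is_fresh("2024-01-01T00:00:00+00:00", 90, now))  # False
```

The check only reports age. Updating a timestamp without a substantive change would defeat the purpose of the signal.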

Step 4: Declare feeds in llms.txt. Create a /llms.txt file at your domain root listing all structured feeds, including pricing feeds, product or service catalogues, team directories, location feeds, certification data, and news updates.
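The llms.txt format is a community proposal rather than a formal standard, and support varies between AI platforms. Under that caveat, a minimal file can be generated as Markdown with a title, a short summary, and a list of feed links. The site name, summary, and example.com URLs below are placeholders.

```python
def build_llms_txt(site_name: str, summary: str, feeds: dict[str, str]) -> str:
    """Render a minimal llms.txt body: H1 title, blockquote summary, linked feed list."""
    lines = [f"# {site_name}", "", f"> {summary}", "", "## Structured feeds", ""]
    lines += [f"- [{title}]({url})" for title, url in feeds.items()]
    return "\n".join(lines) + "\n"


# Placeholder feeds; replace with your real endpoints.
feeds = {
    "Pricing feed": "https://example.com/feeds/pricing.json",
    "Service catalogue": "https://example.com/feeds/services.json",
    "Certification data": "https://example.com/feeds/certifications.json",
}

llms_txt = build_llms_txt("Example Co", "B2B supplier of industrial widgets.", feeds)
print(llms_txt)
```

Write the result to /llms.txt at the domain root, and regenerate it whenever a feed is added or retired so the file does not advertise dead endpoints.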

Common pitfall: Publishing feeds without CORS headers or with authentication requirements that block AI crawlers. Ensure feeds are publicly accessible or provide API keys to major AI platforms.
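A quick self-check for this pitfall is to inspect the status code and headers your feed returns to an anonymous client. The sketch below takes those values as inputs rather than making a network call, so it can be paired with whatever HTTP client you already use. Note that CORS is enforced by browsers, so the header check mainly matters for browser-based agents. The rules here are illustrative assumptions.

```python
def feed_access_issues(status: int, headers: dict[str, str]) -> list[str]:
    """List likely accessibility problems for a feed response fetched anonymously."""
    normalised = {key.lower(): value for key, value in headers.items()}
    issues = []
    if status in (401, 403):
        issues.append("feed requires authentication or blocks the client")
    elif status != 200:
        issues.append(f"unexpected status {status}")
    if "access-control-allow-origin" not in normalised:
        issues.append("missing Access-Control-Allow-Origin header")
    return issues


# Hypothetical responses from two feeds.
print(feed_access_issues(200, {"Access-Control-Allow-Origin": "*"}))
print(feed_access_issues(401, {"Content-Type": "application/json"}))
```

An empty list means none of these specific problems were detected. It does not guarantee that any particular AI crawler can reach the feed.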

Optimising Surface 3: Live Site and In-App Experiences

The objective is to make your user interface navigable, interpretable, and trustworthy for AI agents.

- Use semantic HTML (form, label, button, nav, main, article) instead of generic div elements.

- Provide descriptive aria-label attributes for interactive elements.

- Avoid CAPTCHA or bot-blocking on quote request forms – use honeypot fields instead.

- Ensure forms submit without JavaScript errors through progressive enhancement.

- Use breadcrumb trails and expose key pages in clean URL structures.

- Provide structured ContactPoint schema for phone, email, and chat channels.

- Use IP-based rate limiting instead of blanket bot blocks on user-agent strings.
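The last recommendation can be sketched as a sliding-window limiter keyed by client IP. This is a minimal in-memory illustration, assuming a single process and caller-supplied timestamps; the class name and limits are hypothetical, and a production setup would more likely use a reverse proxy or shared store.

```python
from collections import defaultdict, deque


class IpRateLimiter:
    """Allow at most max_requests per IP within a sliding window of window_seconds."""

    def __init__(self, max_requests: int, window_seconds: float) -> None:
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._hits: dict[str, deque] = defaultdict(deque)

    def allow(self, ip: str, now: float) -> bool:
        hits = self._hits[ip]
        # Drop timestamps that have aged out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False
        hits.append(now)
        return True


# Two requests per minute per IP; 203.0.113.x is a documentation address range.
limiter = IpRateLimiter(max_requests=2, window_seconds=60)
results = [limiter.allow("203.0.113.5", t) for t in (0, 1, 2, 61)]
print(results)  # [True, True, False, True]
```

Because the decision depends on request volume rather than the user-agent string, a well-behaved AI agent is treated the same as any other low-volume visitor.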

AI Agent Accessibility: Blocked vs Optimised Patterns

| Blocked Pattern | Optimised Pattern |
| --- | --- |
| CAPTCHA walls on every form | Honeypot fields for bot detection |
| Generic div elements with no semantic meaning | Semantic HTML: form, label, nav, main, article |
| JavaScript-only rendering with no fallback | Server-side rendering or progressive enhancement |
| Bot-blocking user-agent filters | IP-based rate limiting |
| Login required before any information is visible | Key information visible before login |
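The honeypot pattern in the table relies on a form field that is hidden from human visitors and should therefore arrive empty. A server-side check might look like the sketch below. The field name website_url is a hypothetical choice, and the approach only catches naive form-filling bots.

```python
def is_probable_bot(form: dict[str, str], honeypot_field: str = "website_url") -> bool:
    """Return True if the hidden honeypot field was filled in."""
    return bool(form.get(honeypot_field, "").strip())


# Hypothetical quote-request submissions.
human = {"name": "Jane Example", "email": "jane@example.com", "website_url": ""}
spam = {"name": "x", "email": "x@example.com", "website_url": "http://spam.example"}

print(is_probable_bot(human))  # False
print(is_probable_bot(spam))   # True
```

Submissions flagged this way can be discarded silently, which avoids putting a challenge in front of legitimate users and agents.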

Cross-Surface Consistency

Contradictions between surfaces degrade Trust Signals and reduce Citation Authority. For example, if your website claims 24/7 support but your structured contact feed shows limited hours, AI systems may deprioritise your brand in recommendations requiring always-available support.

Ensure the following information is synchronised across all three surfaces:

- Company name, brand entity, and legal information

- Contact details including phone, email, and addresses

- Geographic coverage and service areas

- Product or service names, versions, and specifications

- Pricing, plan names, and feature availability

- Certifications, compliance status, and credentials
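A synchronisation audit of the items above can start with a simple field-by-field comparison. The sketch below assumes you have already extracted key facts from each surface into dictionaries. The surface names, field names, and values are hypothetical, and matching is exact after trimming whitespace and ignoring case.

```python
def find_contradictions(surfaces: dict[str, dict[str, str]]) -> dict[str, dict[str, str]]:
    """Return each field whose value differs between surfaces, with the per-surface values."""
    fields = set().union(*(data.keys() for data in surfaces.values()))
    conflicts = {}
    for field in sorted(fields):
        seen = {name: data[field] for name, data in surfaces.items() if field in data}
        if len({value.strip().casefold() for value in seen.values()}) > 1:
            conflicts[field] = seen
    return conflicts


# Hypothetical extracted facts, mirroring the 24/7 support example.
surfaces = {
    "crawled_web": {"support_hours": "24/7", "plan_price": "$49/month"},
    "feed": {"support_hours": "Mon-Fri 09:00-17:00", "plan_price": "$49/month"},
    "live_site": {"plan_price": "$49/month"},
}

conflicts = find_contradictions(surfaces)
print(conflicts)
```

Here only support_hours is reported, because the price agrees everywhere and a field missing from one surface is not treated as a contradiction.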

Evidence and Data

Microsoft’s Three-Surface Framework

Microsoft Advertising’s AEO and GEO guide (2024) establishes several key principles for AI visibility across B2B contexts:

- Synchronisation across surfaces: AI systems prioritise sources where information is consistent and up to date across crawled content, feeds, and live site interactions.

- Freshness as a ranking signal: Dynamic data sources with dateModified timestamps signal recency and reliability to RAG systems. AI systems preferentially retrieve from sources with recent modification timestamps.

- Verified data reduces hallucination: Structured feeds with explicit schema markup enable AI systems to ground responses in authoritative sources, reducing misattribution.

- Reviews and third-party mentions: Review volume, verified customer testimonials, and third-party mentions act as trust multipliers across all three surfaces.

For B2B companies, third-party trust signals include G2, Capterra, and TrustRadius reviews for software companies; Clutch reviews and client testimonials for professional services; industry certifications and supplier ratings for manufacturers; and customer references and performance scorecards for service providers.

CiteCompass Perspective

CiteCompass provides AI visibility monitoring and optimisation across all three data surfaces, enabling B2B companies to understand and improve their Share of Model and Citation Authority.

Surface 1: Crawled Web Monitoring

CiteCompass tracks how AI systems retrieve and cite your public content, identifying which pages are cited most frequently, where citations are attributed versus unattributed, and content gaps where competitors are cited instead. CiteCompass Professional Services identifies schema validation errors that reduce RAG retrieval likelihood.

Surface 2: Feed Health and Validation

CiteCompass Professional Services validates structured feeds for schema compliance and completeness, freshness signals (dateModified recency), CORS configuration and crawler accessibility, and data consistency between feeds and web content.

Surface 3: Agent Accessibility Testing

CiteCompass Professional Services uses AI agent simulations to evaluate form navigability and friction points, information architecture and discoverability, interactive tool comprehensibility, and contact and conversion flow accessibility.

The three-surface framework reflects the actual mechanisms AI systems use to build brand understanding. CiteCompass does not replace your content management, developer tools, or analytics platforms. It complements them by measuring AI perception of your brand, enabling you to prioritise optimisation efforts based on actual AI citation behaviour rather than assumptions about what AI systems value. Learn more about the AI Visibility Suite.

What Changed Recently

- 2026-02: AI Data Surfaces page broadened from B2B SaaS to all B2B companies with industry-specific examples

- 2026-01: Microsoft Advertising published the AEO and GEO guide establishing the three-surface framework

- 2025 Q4: Google AI Overviews began prioritising sources with synchronised feeds and web content

- 2025 Q4: ChatGPT introduced browsing agents capable of navigating trial signups and product demos

- 2025 Q3: Schema.org added SoftwareApplication extensions for SaaS-specific metadata

Explore the Six Pillars of AI Visibility

The CiteCompass Knowledge Hub organises AI visibility optimisation into six interconnected pillars:

- Core Frameworks: GEO, AEO, RAG, and how AI systems rank and cite sources

- E-E-A-T and Trust Signals: entity disambiguation, author authority, and content integrity

- Optimisation Metrics: Citation Authority, Share of Model, Entity Confidence Score

- Technical Implementation: schema markup, JSON-LD, llms.txt, and AI crawler accessibility

- Content Strategy: original research, FAQ structures, and evergreen topic selection

- Market Intelligence: competitive citation patterns, topic gaps, and Share of Model benchmarking

References

1. Microsoft Advertising. (2024). From Discovery to Influence: A Guide to AEO and GEO. Microsoft Corporation. https://about.ads.microsoft.com/en/blog/post/january-2025/from-discovery-to-influence-a-guide-to-aeo-and-geo

2. Google Search Central. (2024). Understand how structured data works. https://developers.google.com/search/docs/appearance/structured-data/intro-structured-data

3. Schema.org. (2024). Full Hierarchy. https://schema.org/docs/full.html